API

To call the API, choose your preferred programming language. The example below uses Python.

    import requests
    import base64

    # Use this function to convert an image file from the filesystem to base64
    def image_file_to_base64(image_path):
        with open(image_path, 'rb') as f:
            image_data = f.read()
        return base64.b64encode(image_data).decode('utf-8')

    # Use this function to fetch an image from a URL and convert it to base64
    def image_url_to_base64(image_url):
        response = requests.get(image_url)
        image_data = response.content
        return base64.b64encode(image_data).decode('utf-8')

    api_key = "YOUR_API_KEY"
    url = "https://api.segmind.com/v1/sd1.5-controlnet-openpose"

    # Request payload
    data = {
        "image": image_url_to_base64("https://segmind.com/fashion2.jpeg"),  # Or use image_file_to_base64("IMAGE_PATH")
        "samples": 1,
        "prompt": "a beautiful fashion model, wearing a red polka dress, red door background. hyperrealistic. photorealism, 4k, extremely detailed",
        "negative_prompt": "Disfigured, cartoon, blurry, nude",
        "scheduler": "UniPC",
        "num_inference_steps": 25,
        "guidance_scale": 7.5,
        "strength": 1,
        "seed": 919194474388,
        "base64": False
    }

    headers = {'x-api-key': api_key}

    response = requests.post(url, json=data, headers=headers)
    print(response.content)  # The response is the generated image

Attributes

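- image: Input image.
- samples: Number of samples to generate (min: 1, max: 4).
- prompt: Prompt to render.
- negative_prompt: Prompts to exclude, e.g. 'bad anatomy, bad hands, missing fingers'.
- scheduler: Type of scheduler, chosen from a fixed set of allowed values (the example request uses "UniPC").
- num_inference_steps: Number of denoising steps (min: 20, max: 100).
- guidance_scale: Scale for classifier-free guidance (min: 0.1, max: 25).
- strength: How much to transform the reference image (min: 0.1, max: 1).
- seed: Seed for image generation.
- base64: Base64 encoding of the output image (False in the example request).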
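The documented ranges can be checked on the client before a request is sent, which avoids spending a call on a payload the API would likely reject. The following is a minimal sketch, not part of the Segmind API: the names PARAM_RANGES and validate_payload are hypothetical, and treating both bounds as inclusive is an assumption (the example request uses strength 1, which equals the documented maximum).

```python
# Ranges copied from the attribute list; names here are illustrative only.
# Assumption: both min and max are inclusive.
PARAM_RANGES = {
    "samples": (1, 4),
    "num_inference_steps": (20, 100),
    "guidance_scale": (0.1, 25),
    "strength": (0.1, 1),
}


def validate_payload(payload):
    """Return a list of problems; an empty list means every checked value is in range."""
    problems = []
    for name, (low, high) in PARAM_RANGES.items():
        if name not in payload:
            continue  # Keys absent from the payload are not checked here
        value = payload[name]
        if not low <= value <= high:
            problems.append(f"{name}={value} is outside [{low}, {high}]")
    return problems
```

Calling validate_payload(data) on the request payload from the example should return an empty list; a non-empty list names each out-of-range parameter.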

To keep track of your credit usage, inspect the response headers of each API call. The x-remaining-credits header reports the number of credits remaining in your account. Monitor this value to avoid disruptions in your API usage.
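Reading the credit header might look like the sketch below. The helper name remaining_credits is hypothetical, and the code assumes the header value is numeric text; the source does not state its exact format, so a non-numeric value is treated as unknown.

```python
def remaining_credits(headers):
    """Return the x-remaining-credits header as a float, or None if absent or not numeric."""
    raw = headers.get("x-remaining-credits")
    if raw is None:
        return None
    try:
        return float(raw)  # Assumption: the value is numeric text
    except ValueError:
        return None


# Usage with the response from the example request:
# credits = remaining_credits(response.headers)
# if credits is not None:
#     print(f"Remaining credits: {credits}")
```

The function accepts any mapping with a get method, so it works with the headers attribute of a requests response (which is case-insensitive) as well as a plain dict.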

ControlNet Openpose SD1.5

ControlNet OpenPose: A Fusion of Precision and Power in Human Pose Estimation

ControlNet OpenPose combines ControlNet's conditioning capabilities with OpenPose's human pose estimation. The integration gives users pose-level control over image generation within the Stable Diffusion framework.

At the heart of ControlNet OpenPose lies a synergistic combination of ControlNet's robust control mechanisms and OpenPose's state-of-the-art pose estimation algorithms. This architecture is meticulously designed to process vast amounts of visual data, ensuring accurate and real-time human pose detection and manipulation.

Advantages

- Enhanced Precision: By merging ControlNet with OpenPose, users experience a significant boost in the accuracy of pose estimation.
- Real-time Manipulation: The integrated system allows for instantaneous adjustments and control of human poses.
- Versatility: Suitable for a range of applications, from animation to fitness tracking, thanks to its comprehensive feature set.
- Optimized for Stable Diffusion: Achieve controlled and targeted results within the Stable Diffusion framework, especially when working with human subjects.

Use Cases

- Film and Animation: Animators can use ControlNet OpenPose to create realistic human movements and postures in animated sequences.
- Fashion and Apparel Design: Designers can create virtual models with accurate human poses for fitting and design visualizations, leading to more precise clothing design and lower-cost production processes.

ControlNet Openpose License

ControlNet Openpose is released under the CreativeML OpenRAIL-M license. This choice reflects a commitment to responsible AI and aligns the model with the principles set forth by BigScience and the RAIL Initiative, whose collaborative work on AI ethics and responsibility set the benchmark for licenses like OpenRAIL-M.

Other Popular Models

sdxl-controlnet

SDXL ControlNet gives unprecedented control over text-to-image generation. SDXL ControlNet models introduce the concept of conditioning inputs, which provide additional information to guide the image generation process.

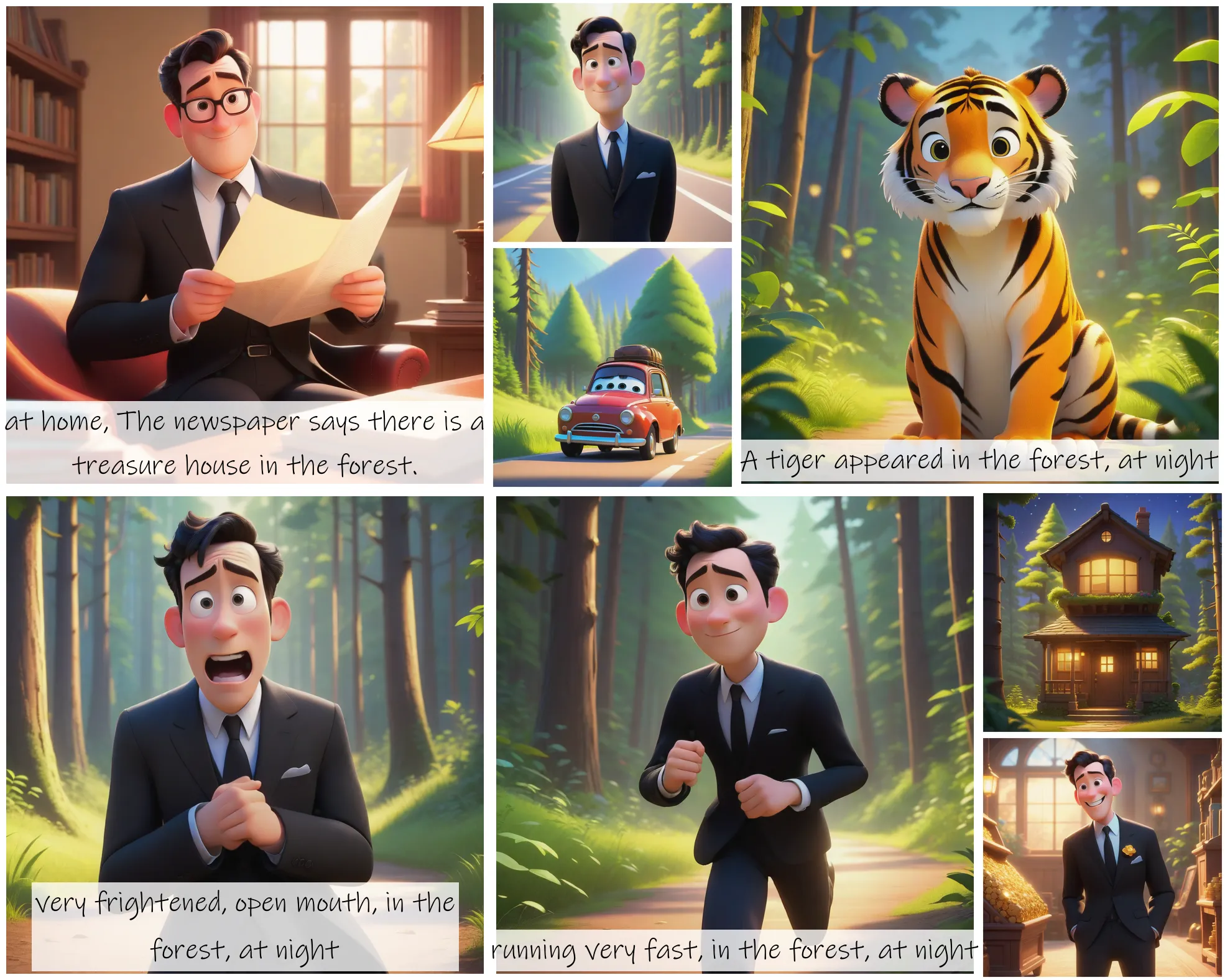

storydiffusion

Story Diffusion turns your written narratives into stunning image sequences.

fooocus

Fooocus enables high-quality image generation effortlessly, combining the best of Stable Diffusion and Midjourney.

sd2.1-faceswapper

Take a picture or GIF and replace the face in it with a face of your choice. You only need one image of the desired face. No dataset, no training.