API

To call the model through the API, choose your preferred programming language. The example on this page uses Python.

    import requests
    import base64

    # Use this function to convert an image file from the filesystem to base64
    def image_file_to_base64(image_path):
        with open(image_path, 'rb') as f:
            image_data = f.read()
        return base64.b64encode(image_data).decode('utf-8')

    # Use this function to fetch an image from a URL and convert it to base64
    def image_url_to_base64(image_url):
        response = requests.get(image_url)
        image_data = response.content
        return base64.b64encode(image_data).decode('utf-8')

    # Use this function to convert a list of image URLs to base64
    def image_urls_to_base64(image_urls):
        return [image_url_to_base64(url) for url in image_urls]

    api_key = "YOUR_API_KEY"
    url = "https://api.segmind.com/v1/sd1.5-controlnet-scribble"

    # Request payload
    data = {
        "image": image_url_to_base64("https://segmind.com/scribble-input.jpeg"),  # Or use image_file_to_base64("IMAGE_PATH")
        "samples": 1,
        "prompt": "steampunk underwater helmet, dark ocean background",
        "negative_prompt": "Disfigured, cartoon, blurry, nude",
        "scheduler": "UniPC",
        "num_inference_steps": 25,
        "guidance_scale": 7.5,
        "strength": 1,
        "seed": 85497675147333,
        "base64": False
    }

    headers = {'x-api-key': api_key}

    response = requests.post(url, json=data, headers=headers)
    print(response.content)  # The response is the generated image

Attributes

- image: Input image.
- samples: Number of samples to generate. min: 1, max: 4.
- prompt: Prompt to render.
- negative_prompt: Prompts to exclude, e.g. 'bad anatomy, bad hands, missing fingers'.
- scheduler: Type of scheduler. Allowed values: not listed; the example request uses "UniPC".
- num_inference_steps: Number of denoising steps. min: 20, max: 100.
- guidance_scale: Scale for classifier-free guidance. min: 0.1, max: 25.
- strength: How much to transform the reference image. min: 0.1, max: 1.
- seed: Seed for image generation.
- base64: Base64 encoding of the output image.
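The numeric ranges listed above can be checked on the client before a request is sent. The following is a minimal sketch, not part of the Segmind API: `LIMITS` and `validate_payload` are hypothetical names, and the parameter names are taken from the example payload, matched to the attribute descriptions in order.

```python
# Hypothetical client-side check; the ranges are copied from the attribute list.
LIMITS = {
    "samples": (1, 4),
    "num_inference_steps": (20, 100),
    "guidance_scale": (0.1, 25),
    "strength": (0.1, 1),
}


def validate_payload(payload):
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    for name, (low, high) in LIMITS.items():
        if name not in payload:
            continue  # absent parameters are left for the server to handle
        value = payload[name]
        # bool is a subclass of int in Python, so reject it explicitly
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            problems.append(f"{name} must be a number, got {value!r}")
        elif not low <= value <= high:
            problems.append(f"{name}={value} is outside [{low}, {high}]")
    return problems


if __name__ == "__main__":
    # Values from the example request: every range is satisfied.
    print(validate_payload({"samples": 1, "num_inference_steps": 25,
                            "guidance_scale": 7.5, "strength": 1}))
    # Two out-of-range values: one problem is reported for each.
    print(validate_payload({"samples": 8, "strength": 0}))
```

Running the check before `requests.post` avoids spending a call on a payload that falls outside the documented ranges. The server remains the authority on what it accepts.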

To keep track of your credit usage, inspect the response headers of each API call. The x-remaining-credits header gives the number of credits remaining in your account. Monitor this value to avoid disruptions in your API usage.
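Reading that value can be sketched as follows. This is an illustration under assumptions: `remaining_credits` is a hypothetical helper, and the value is assumed to be a plain number, which the text above does not specify. The function accepts any mapping of header names to values, such as `response.headers` from `requests`.

```python
def remaining_credits(headers):
    """Return the x-remaining-credits value as a float, or None.

    Assumption: the header carries a plain numeric string. If it is
    missing or not numeric, None is returned instead of raising.
    """
    # HTTP header names are case-insensitive, so normalize before lookup.
    lowered = {str(key).lower(): value for key, value in headers.items()}
    raw = lowered.get("x-remaining-credits")
    if raw is None:
        return None
    try:
        return float(raw)
    except (TypeError, ValueError):
        return None


if __name__ == "__main__":
    # Stand-in for response.headers; the values are made up for illustration.
    sample_headers = {"Content-Type": "image/jpeg", "X-Remaining-Credits": "41.5"}
    print(remaining_credits(sample_headers))
```

With the request from the example code, the call would be `remaining_credits(response.headers)`. A `None` result means the value could not be read, not that the account is empty.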

ControlNet Scribble

The ControlNet Scribble model generates images from manual annotations. Users steer the generation process by scribbling or marking the desired content on an image, which narrows the gap between user intent and model output.

ControlNet Scribble interprets these annotations as directives for image generation. The markings are used together with the text prompt, so the generated output follows the user's sketch as well as the written description.

Advantages

- User-Directed Generation: Provides users with the autonomy to guide the image generation process through direct annotations.
- Fine-Grained Control: Offers unparalleled precision, allowing users to influence specific aspects of the generated image.
- Tailored Outputs: Ensures that the generated images resonate with user preferences, capturing the essence of their desired modifications.

Use Cases

- Customized Image Editing: Ideal for users who wish to make specific edits or modifications to their images.
- Artistic Creations: Artists can harness the scribble preprocessors to infuse hand-drawn authenticity into digital artworks.
- Interactive Design: Designers can iteratively shape their designs, making real-time adjustments through scribbles.
- Educational Tools: Can be integrated into learning platforms, allowing students to interactively engage with image-based content.

ControlNet Scribble License

ControlNet Scribble is released under the CreativeML OpenRAIL-M license. The choice reflects a commitment to responsible AI use and aligns the model with the principles set out by BigScience and the RAIL Initiative, whose joint work on AI ethics and responsibility set the benchmark for licenses like OpenRAIL-M.

Other Popular Models

sdxl-controlnet

SDXL ControlNet gives unprecedented control over text-to-image generation. SDXL ControlNet models introduce conditioning inputs, which provide additional information to guide the image generation process.

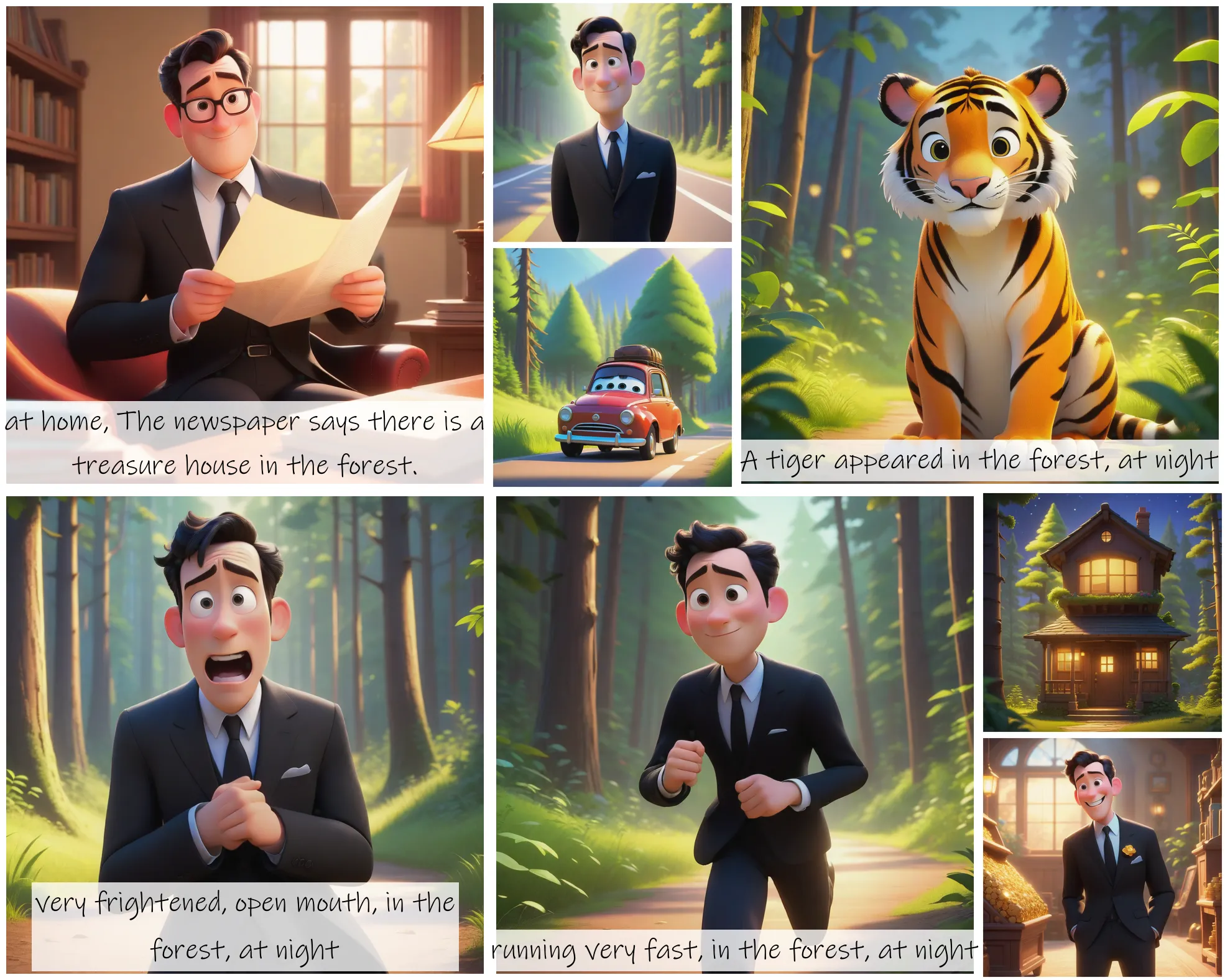

storydiffusion

Story Diffusion turns your written narratives into stunning image sequences.

idm-vton

Best-in-class virtual clothing try-on in the wild.

sd2.1-faceswapper

Take a picture or GIF and replace the face in it with a face of your choice. You only need one image of the desired face. No dataset and no training are required.