Stable Diffusion 3 (SD3) Pose ControlNet

Stable Diffusion 3 (SD3) Pose ControlNet is a deep learning model that generates images from text prompts while using pose information as guidance. It interprets the human pose in an input image and aligns the generated image with that pose, giving finer control over the result than a text prompt alone.

How to Use the Model?

- Input Prompts: Provide a textual description of the desired image in the "Prompt" field.
- Input Image: Upload an image whose human pose will guide the generation process.
- Negative Prompts: List the elements to exclude from the generated image.
- Inference Steps: Set the number of steps the model uses to refine the image. More steps typically result in higher quality, at the cost of longer processing time.
- Strength: Set how strongly the input image influences the final output. Higher values make the generated image adhere more closely to the input pose.
- Seed: Set a seed value for reproducibility. Use a random seed if consistency is not required.
- Guidance Scale: Set how closely the generated image follows the prompt. Higher values make the image follow the prompt more closely.
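
The parameters above can be collected into a single settings object and checked before a generation request is submitted. The following is a minimal sketch, not the model's actual API: the class name `PoseControlNetRequest`, its field names, the default values, and the validation ranges are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class PoseControlNetRequest:
    """Hypothetical container for the parameters described above."""

    prompt: str
    image_path: str                  # pose reference image
    negative_prompt: str = ""
    num_inference_steps: int = 20    # assumed default
    strength: float = 0.8            # assumed to lie in [0, 1]
    guidance_scale: float = 7.0      # assumed default
    seed: Optional[int] = None       # None means "pick a random seed"

    def __post_init__(self) -> None:
        if not self.prompt.strip():
            raise ValueError("prompt must not be empty")
        if self.num_inference_steps < 1:
            raise ValueError("num_inference_steps must be at least 1")
        if not 0.0 <= self.strength <= 1.0:
            raise ValueError("strength is assumed to lie in [0, 1]")
        if self.guidance_scale < 0:
            raise ValueError("guidance_scale must be non-negative")
        if self.seed is None:
            # Store the chosen seed so the run can be reproduced later.
            self.seed = random.randint(0, 2**32 - 1)

    def to_payload(self) -> dict:
        """Return the settings as a plain dictionary."""
        return asdict(self)


request = PoseControlNetRequest(
    prompt="a dancer mid-leap on a stage, dramatic lighting",
    image_path="pose_reference.png",
    negative_prompt="blurry, extra limbs",
    num_inference_steps=30,
    strength=0.8,
    guidance_scale=7.5,
    seed=1234,
)
payload = request.to_payload()
```

Recording the seed even when it was chosen at random means any output worth keeping can be regenerated later with the same settings.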

How to Fine-Tune Outputs?

Outputs can be fine-tuned by adjusting several parameters:

- Inference Steps: Increasing the number of steps (e.g., from 20 to 50) can produce finer details, at the cost of longer processing time.
- Strength: Controls the influence of the input image. For minor adjustments, vary the value between 0.6 and 0.9. Lower values give the model more creative freedom.
- Guidance Scale: Typically set between 7 and 15. Use higher values for strict adherence to the prompt and lower values for more abstract results.
- Sampler: Different samplers (e.g., ddim, p_sampler) can affect the generation style and speed. Experiment with them to find the best balance for your use case.
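
One way to explore these settings systematically is to hold the seed fixed and vary the other parameters, so that differences between outputs come from the settings rather than from random noise. The sketch below only builds the list of settings to try. The function `build_sweep` and the dictionary keys are hypothetical names, and submitting each entry to the model is left out.

```python
from itertools import product


def build_sweep(steps_options, strength_options, guidance_options, seed):
    """Return one settings dict per combination, all sharing the same seed."""
    return [
        {
            "num_inference_steps": steps,
            "strength": strength,
            "guidance_scale": guidance,
            "seed": seed,
        }
        for steps, strength, guidance in product(
            steps_options, strength_options, guidance_options
        )
    ]


# Values taken from the ranges suggested above.
sweep = build_sweep(
    steps_options=[20, 50],
    strength_options=[0.6, 0.75, 0.9],
    guidance_options=[7, 11, 15],
    seed=42,
)
print(len(sweep))  # 2 * 3 * 3 = 18 combinations
```

Because a full grid grows multiplicatively, it may be more practical to vary one parameter at a time once a promising region has been found.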

Use Cases

SD3 Medium Pose ControlNet is versatile and can be applied to numerous scenarios:

- Character Design: Generate characters in specific poses for games, animation, or artwork.
- Marketing and Advertising: Create posed images that align with marketing campaigns and product placements.
- Educational Materials: Develop educational visuals that require accurate representation of human poses.
- Entertainment: Produce scenes and illustrations requiring specific postures, enhancing storytelling and scene composition.

Other Popular Models

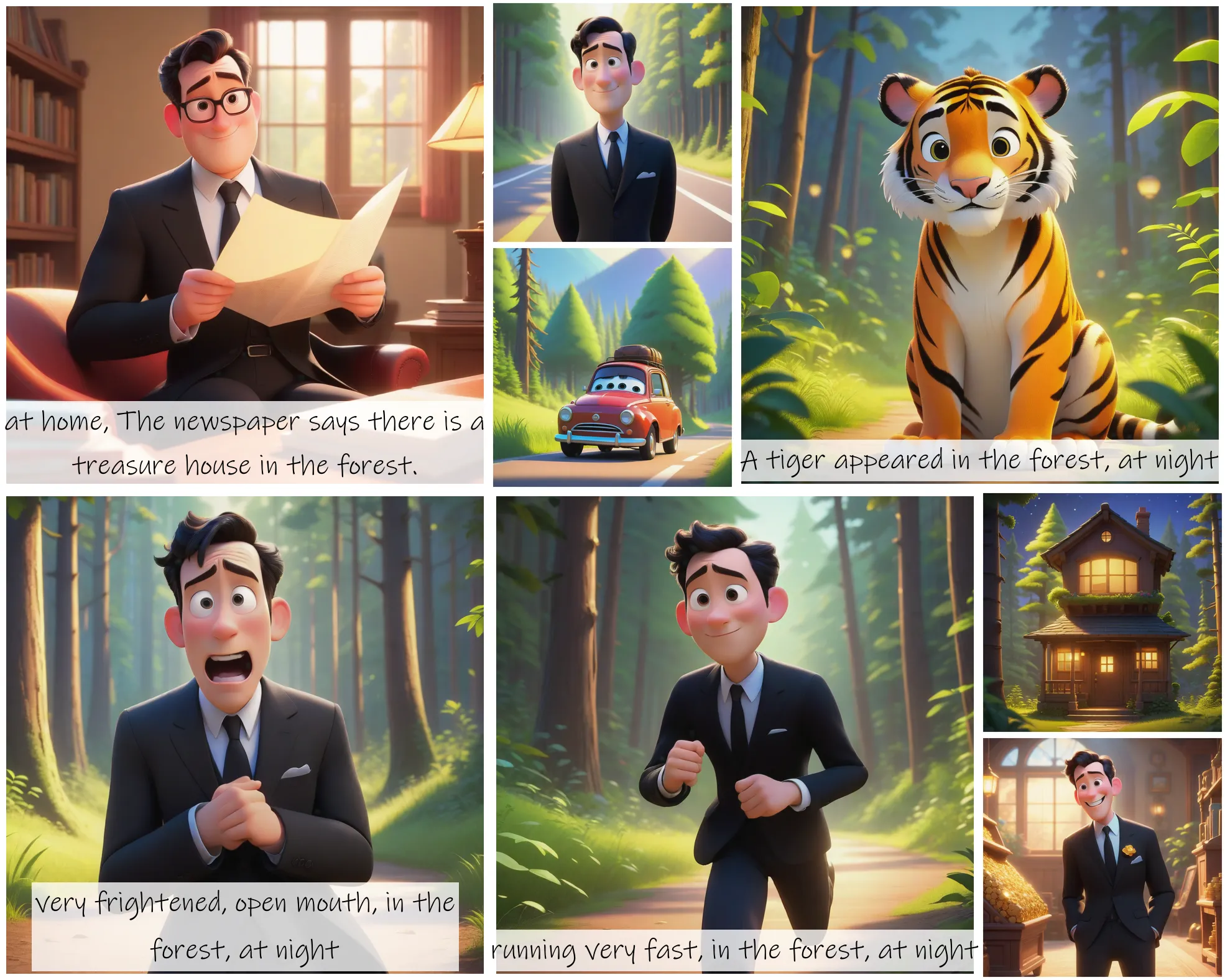

storydiffusion

Story Diffusion turns your written narratives into stunning image sequences.

idm-vton

Best-in-class clothing virtual try on in the wild

faceswap-v2

Take a picture/gif and replace the face in it with a face of your choice. You only need one image of the desired face. No dataset, no training

sd2.1-faceswapper

Take a picture/gif and replace the face in it with a face of your choice. You only need one image of the desired face. No dataset, no training