Text Embedding 3 Small

Text-embedding-3-small is a compact and efficient model developed for generating high-quality text embeddings. These embeddings are numerical representations of text data, enabling a variety of natural language processing (NLP) tasks such as semantic search, clustering, and text classification. The smaller model size allows for faster processing and lower resource consumption while maintaining robust performance.

How to Fine-Tune Outputs?

Input Text Length: Balance text length according to the specific task requirements. Short texts may not capture enough context, while very long texts might need truncation or summarization strategies.
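One way to handle long inputs is to split them into overlapping chunks and embed each chunk separately. The sketch below is only an illustration: the function name `chunk_words` and its default sizes are hypothetical, and whitespace-separated words are used as a rough stand-in for model tokens, which a real pipeline would count with the model's own tokenizer.

```python
def chunk_words(text: str, max_words: int = 200, overlap: int = 20) -> list[str]:
    """Split text into chunks of at most max_words words.

    Consecutive chunks share `overlap` words so that context spanning a
    chunk boundary is not lost. Words approximate tokens here (assumption).
    """
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must satisfy 0 <= overlap < max_words")

    words = text.split()
    if not words:
        return []

    step = max_words - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the current window already reached the end of the text
    return chunks
```

Each returned chunk can then be embedded on its own. Very short inputs come back as a single chunk, so the same code path covers both short and long texts.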

Use Cases

Text-embedding-3-small is versatile and can be deployed in numerous NLP applications:

- Semantic Search: Enhance search engines by leveraging embeddings to measure similarity between user queries and documents.

- Text Classification: Use embeddings as input features for training machine learning models in various classification tasks.

- Clustering and Topic Modeling: Employ clustering algorithms on embeddings to identify topics or group similar texts in a corpus.

- Recommendation Systems: Improve recommendation accuracy by computing and comparing embeddings of user queries and item descriptions.
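The semantic search and recommendation cases both come down to comparing vectors. A minimal sketch of that comparison, using cosine similarity, is shown here. The helper names `cosine_similarity` and `rank_documents` are hypothetical, and the tiny two-dimensional vectors are made-up placeholders for real embeddings, which would come from the model and be much longer.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_documents(
    query_vec: list[float],
    doc_vecs: dict[str, list[float]],
    top_k: int = 3,
) -> list[tuple[str, float]]:
    """Return the top_k (doc_id, score) pairs, most similar first."""
    scored = [
        (doc_id, cosine_similarity(query_vec, vec))
        for doc_id, vec in doc_vecs.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy vectors standing in for real embeddings (assumption, not model output).
docs = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
query = [1.0, 0.1]
ranking = rank_documents(query, docs, top_k=2)
```

In this toy data the query points almost in the same direction as document "a", so "a" ranks first and "c" second. The same ranking function applies to recommendations if the query vector is replaced by a user or item embedding.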

Other Popular Models

sdxl-controlnet

SDXL ControlNet gives unprecedented control over text-to-image generation. SDXL ControlNet models Introduces the concept of conditioning inputs, which provide additional information to guide the image generation process

storydiffusion

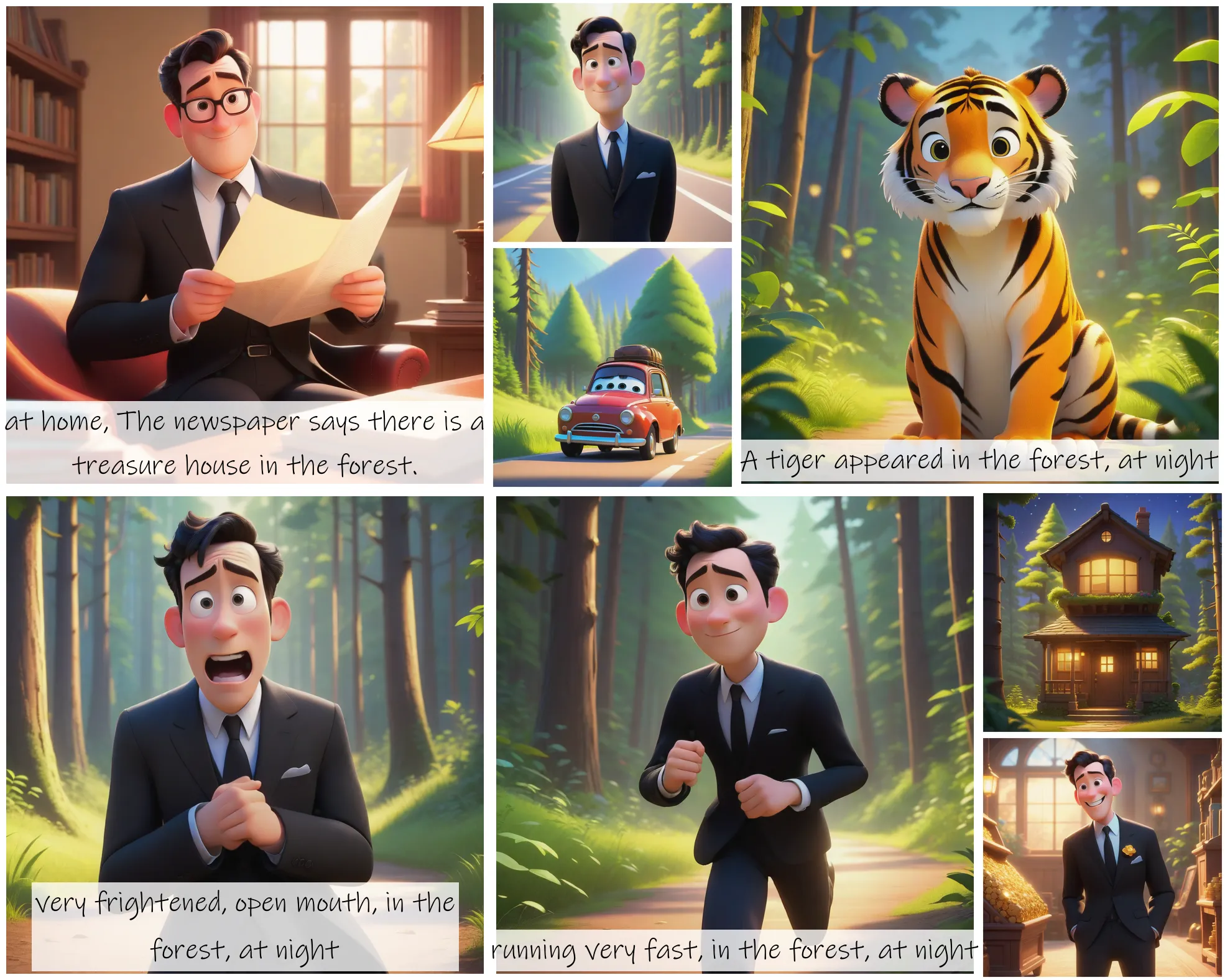

Story Diffusion turns your written narratives into stunning image sequences.

codeformer

CodeFormer is a robust face restoration algorithm for old photos or AI-generated faces.

sd2.1-faceswapper

Take a picture/gif and replace the face in it with a face of your choice. You only need one image of the desired face. No dataset, no training